At Menlo, we’ve spent a long time developing a thesis about where agents will run.

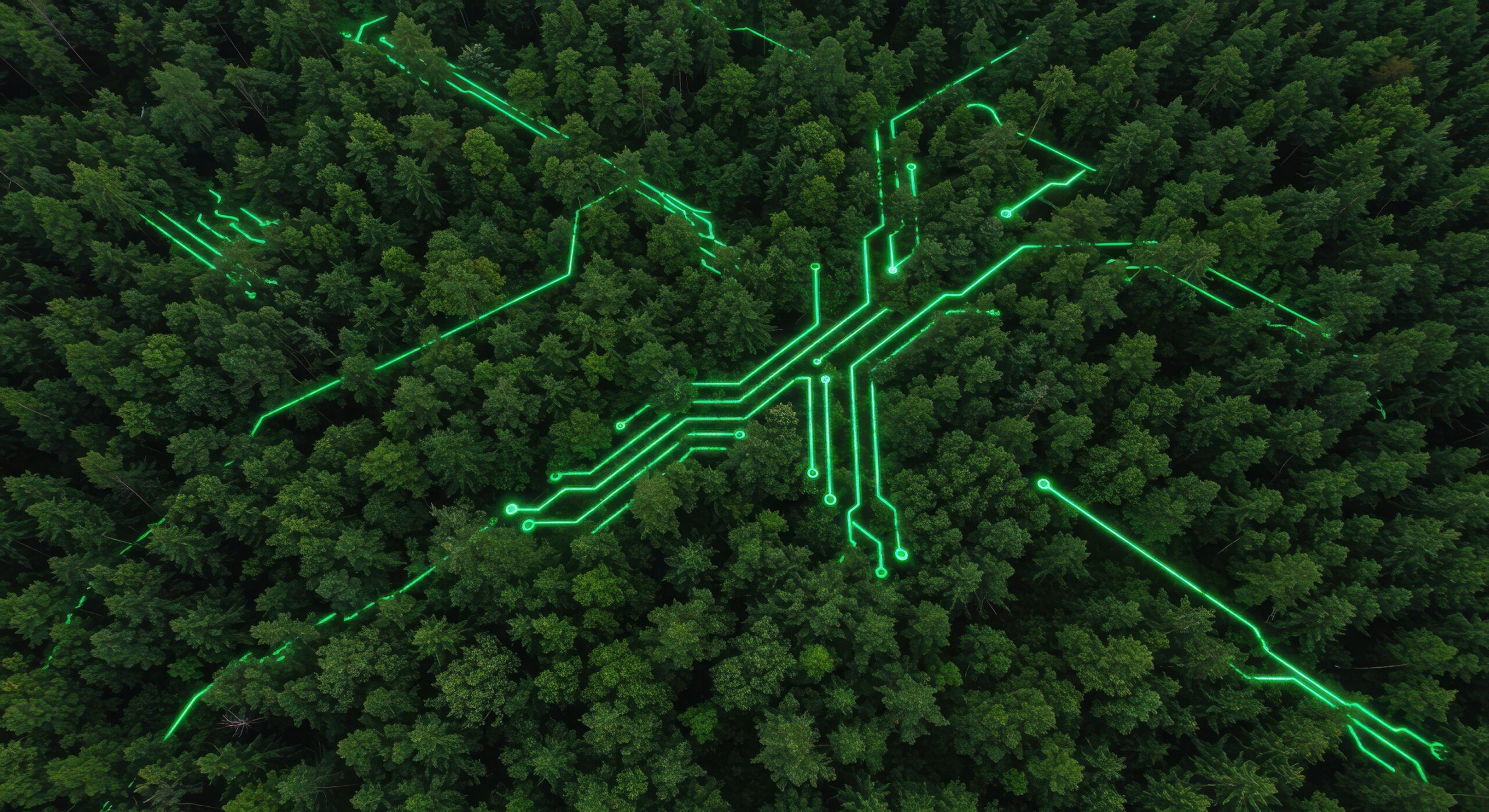

It started with an observation: the shift from simple RAG pipelines to complex, multi-step agent architectures was creating demand for a new compute substrate.

A single agentic task might chain together dozens of model calls, retrieval steps, and tool invocations across non-linear branching logic. Each stage stresses different hardware resources: prefill is compute-bound, decode is memory-bandwidth-bound, and tool calls are network-bound.
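The split between compute-bound prefill and memory-bound decode follows from a back-of-the-envelope roofline estimate. This sketch makes several simplifying assumptions. It uses fp16 weights and counts only weight reads, ignoring activation and KV-cache traffic. It models single-sequence decode at batch size 1. Its peak numbers describe a hypothetical accelerator, not any real chip. Tool calls are not modeled here.

```python
# Back-of-the-envelope roofline check for one dense (d x d) weight matmul.
# Assumptions (illustrative only): fp16 weights, weight reads dominate memory
# traffic, and a hypothetical accelerator with the peak figures below.

BYTES_PER_PARAM = 2              # fp16/bf16 weights
PEAK_TFLOPS = 1000.0             # hypothetical peak compute, TFLOP/s
PEAK_TBPS = 3.0                  # hypothetical memory bandwidth, TB/s
RIDGE = PEAK_TFLOPS / PEAK_TBPS  # FLOPs per byte needed to be compute-bound


def arithmetic_intensity(tokens: int, d: int) -> float:
    """FLOPs per byte of weights read for a (tokens x d) @ (d x d) matmul."""
    flops = 2 * tokens * d * d            # one multiply and one add per MAC
    bytes_read = d * d * BYTES_PER_PARAM  # weights read once; activations ignored
    return flops / bytes_read


def bottleneck(tokens: int, d: int = 8192) -> str:
    """Classify the matmul as compute- or memory-bound on the hypothetical chip."""
    return "compute-bound" if arithmetic_intensity(tokens, d) >= RIDGE else "memory-bound"


# Prefill processes the whole prompt in one pass; decode emits one token per step.
print("prefill, 2048-token prompt:", arithmetic_intensity(2048, 8192), bottleneck(2048))
print("decode, 1 token per step:  ", arithmetic_intensity(1, 8192), bottleneck(1))
```

Because the layer width cancels, intensity here is roughly 2 × tokens ÷ bytes per parameter. A 2,048-token prefill performs about 2,048 FLOPs per weight byte, well above the assumed ridge point of about 333. A single decode step performs about 1, far below it.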

No single chip handles all three efficiently, so the answer is heterogeneous. GPUs remain the workhorse for compute-intensive batch inference. SRAM-focused accelerators from Groq, Cerebras, and d-Matrix are delivering dramatic speed gains for latency-sensitive workloads. CPU demand is surging on the back of orchestration and tool use. And older GPU generations are being redeployed rather than retired. The multi-silicon fleet is ready; what it lacks is the software layer that makes it work as one.

That’s the problem Gimlet Labs solves. The company is building the first multi-silicon inference and compute cloud, deploying traditional GPUs alongside SRAM-centric silicon. Today, we’re excited to announce that Menlo Ventures is leading the company’s Series A.

Building the Future of Inference

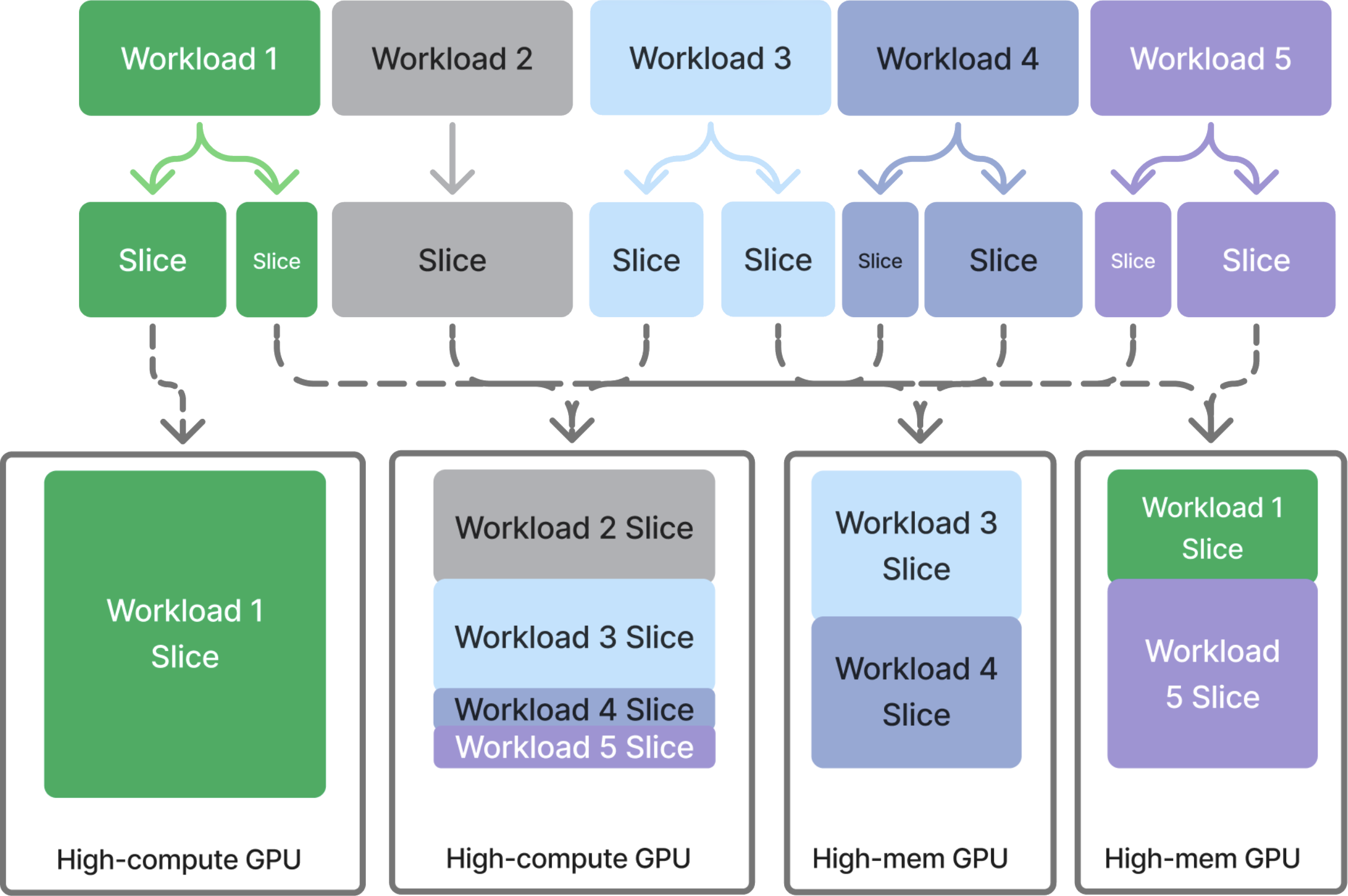

The premise behind Gimlet is straightforward: AI inference can become dramatically more efficient by decoupling AI workloads from specific hardware, decomposing them into constituent stages, and routing each to optimal compute.

Gimlet works by splitting workloads across multi-generation, multi-vendor systems. Each task then inherits the performance and cost characteristics of the best available hardware for that task, rather than averaged performance on mismatched hardware.

This lets Gimlet capture the step-function performance gains and structural cost advantages of emerging accelerators and CPUs, and put capacity that would otherwise sit idle to work.
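The decompose-and-route idea can be illustrated with a toy scheduler. In this sketch, every pool name, stage type, capacity, and preference order is hypothetical. The greedy first-fit policy is a simple stand-in, not Gimlet's actual scheduler. The sketch also ignores real-world concerns such as moving KV cache between prefill and decode hardware. It does show how a stage falls back to older GPUs, capacity that might otherwise sit idle, when its preferred pool is full.

```python
from dataclasses import dataclass


# Hypothetical hardware pools and stages; names and capacities are illustrative only.
@dataclass
class Pool:
    name: str
    free_slots: int


@dataclass
class Stage:
    kind: str     # "prefill", "decode", or "tool_call"
    task_id: str


# Preference order per stage type, best fit first (an assumption, not Gimlet's policy).
PREFERENCES = {
    "prefill": ["gpu_current", "gpu_previous_gen"],
    "decode": ["sram_accelerator", "gpu_current", "gpu_previous_gen"],
    "tool_call": ["cpu"],
}


def route(stage: Stage, pools: dict[str, Pool]) -> str:
    """Assign a stage to the most preferred pool that still has free capacity."""
    for name in PREFERENCES[stage.kind]:
        pool = pools[name]
        if pool.free_slots > 0:
            pool.free_slots -= 1
            return name
    raise RuntimeError(f"no capacity for {stage.kind} stage of {stage.task_id}")


pools = {
    "gpu_current": Pool("gpu_current", 1),
    "gpu_previous_gen": Pool("gpu_previous_gen", 4),
    "sram_accelerator": Pool("sram_accelerator", 1),
    "cpu": Pool("cpu", 8),
}
stages = [
    Stage("prefill", "t1"), Stage("decode", "t1"), Stage("tool_call", "t1"),
    Stage("prefill", "t2"), Stage("decode", "t2"),
]
assignments = [route(s, pools) for s in stages]
print(assignments)
# → ['gpu_current', 'sram_accelerator', 'cpu', 'gpu_previous_gen', 'gpu_previous_gen']
```

Once task t1 fills the newest GPU and the SRAM accelerator, both stages of task t2 land on previous-generation GPUs instead of waiting for the preferred pools to free up.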

In this way, Gimlet is making heterogeneous compute the new default. Leading frontier labs, for example, are increasingly pairing SRAM-centric accelerators with traditional GPUs to push inference speed, and they rely on Gimlet as the orchestration layer across that mixed hardware fleet.

Critically, this doesn’t require developers to rewrite their workloads. Gimlet meets developers where they already are: they can import an existing PyTorch or Hugging Face pipeline, and Gimlet handles the rest.

A Second-Time Founding Team

I’ve known Zain Asgar since my days at Splunk. I watched him build his previous startup, Pixie Labs, into one of the most technically impressive observability startups of its era before it was acquired by New Relic in 2020. Zain is the kind of deeply technical founder who earns conviction fast.

With Gimlet, he’s building alongside four co-founders:

- Michelle Nguyen, who architected Pixie’s core data plane and developer experience
- Omid Azizi, the technical architect behind Pixie’s data collector and kernel instrumentation system
- Natalie Serrino, a founding engineer at both Pixie and Observe
- James Bartlett, a founding engineer at Pixie

This team built hard distributed systems together and shipped them to enterprise customers. Now they are choosing to do it again, this time on an even more ambitious problem. That kind of continuity is rare for such a talent-dense team, and when we saw it in Gimlet, we leaned in immediately.

The Next Phase of AI Infrastructure

Our partnership with Gimlet is the latest expression of a thesis we’ve been building for years. We’ve invested across the AI stack—from foundation models like Anthropic, to databases like Neon (acquired by Databricks) and Pinecone, to data infrastructure like OpenRouter and Unstructured. The through line is our conviction that the AI infrastructure layer is being rebuilt to support the new workloads being created by agents.

Gimlet sits squarely at the core of that thesis. Zain, Michelle, Omid, Natalie, and James have the deep technical credibility to build the foundation of this new stack. We couldn’t be prouder to be in their corner as they build the multi-silicon infrastructure layer that agentic AI needs.

Tim is a partner at Menlo Ventures focused on early-stage investments that speak to his passion for AI/ML, the new data stack, and the next-generation cloud. His background as a technology builder, buyer, and seller informs his investments in companies like Pinecone, Neon (acquired by Databricks), Edge Delta, JuliaHub, TruEra…

As a principal at Menlo Ventures, Derek focuses on early-stage investments across AI, cloud infrastructure, and digital health. He partners with companies from seed through inflection, including Anthropic, Eve, Neon, and Unstructured. Derek joined Menlo from Bain & Company, where he advised technology investors on opportunities ranging from machine learning…