I recently sat down with the founding team at Inception—Stefano Ermon, Aditya Grover, and Volodymyr Kuleshov—to hear the story behind one of AI’s most compelling contrarian bets. Our conversation covered the decade-long collaboration that preceded the company, the technical breakthrough that made it possible, and what it actually took to walk away from tenure to build it. Watch the full video below, and read on for the highlights.

Three Scientists, One Stanford Lab

Stefano grew up in a small village in the Italian Alps. Volo was born in Ukraine and raised in Montreal. Aditya came from India. Three different paths, one shared obsession. They found each other at Stanford—a professor and his students—and spent nearly a decade building trust, trading ideas, and quietly working on a problem most of the AI field had written off as unsolvable.

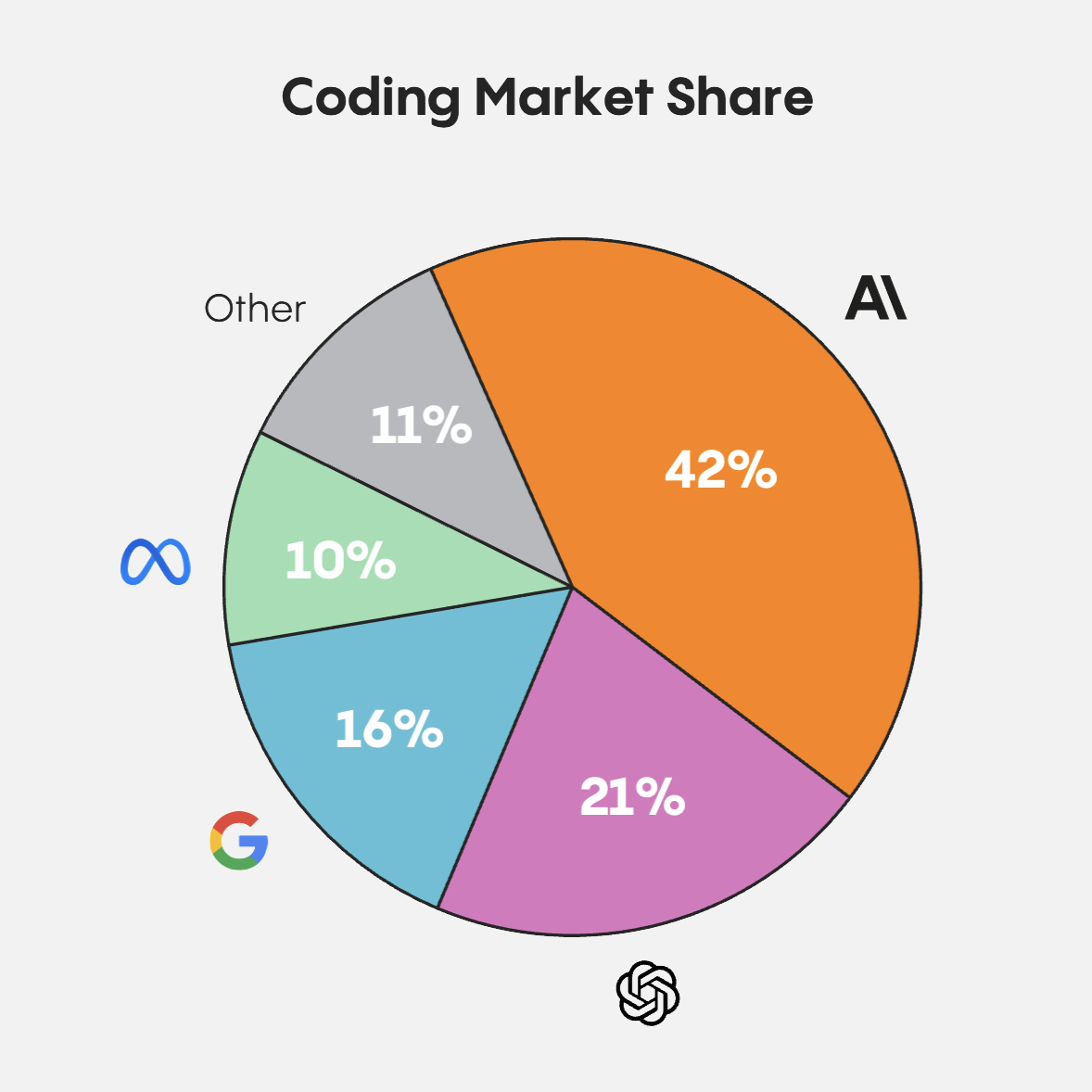

The Contrarian Bet

They set out to ask a simple question: What if diffusion, already dominant for images and video, could work for language, too? The field’s consensus was that it couldn’t. Then Stefano’s lab published a result that changed the conversation: For the first time, a diffusion model matched the quality of autoregressive models on text generation and ran 10x faster. It was a signal that the entire architecture of modern AI might be ready for a rethink.

Walking Away from Tenure

All three had tenure-track positions at world-class universities—Stefano at Stanford, Aditya at UCLA, Volo at Cornell. But the technology had outgrown what any academic lab could do with it. Scaling diffusion language models to production required compute, capital, and engineering capacity that simply didn’t exist inside a university. The only way to find out if this bet was right was to start a company.

What They’re Building

Inception’s Mercury-2 is the world’s first—and fastest—large-scale reasoning model built on diffusion, hitting over 1,000 tokens per second on standard GPUs. Volo draws a direct parallel to the transformer itself: Just as transformers replaced RNNs by making training fully parallel and unlocked the pre-training scaling laws behind GPT, diffusion replaces autoregressive decoding by making inference fully parallel. The team believes all LLMs will eventually be built on diffusion—the kind of claim you make when you’ve spent a decade working on a problem nobody else thought was worth solving, and then solved it.
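
To make the parallel-inference point concrete, here is a toy sketch that only counts model forward passes. It is not Inception's implementation, and no real model is involved: the function names, the placeholder "model call," and the choice of 16 refinement steps are all hypothetical, picked purely for illustration. It assumes an autoregressive decoder needs one pass per generated token, while a diffusion-style decoder refines every position together over a fixed number of steps.

```python
def autoregressive_passes(num_tokens: int) -> int:
    """Count forward passes when tokens are generated one at a time."""
    passes = 0
    sequence = []
    for _ in range(num_tokens):
        passes += 1              # one (hypothetical) model call per new token
        sequence.append("tok")   # placeholder for the sampled token
    return passes


def parallel_refinement_passes(num_tokens: int, num_steps: int) -> int:
    """Count forward passes when all positions are refined together."""
    draft = ["noise"] * num_tokens
    passes = 0
    for _ in range(num_steps):
        passes += 1                   # one (hypothetical) model call per step
        draft = ["tok" for _ in draft]  # every position is updated in the same pass
    return passes


if __name__ == "__main__":
    print(autoregressive_passes(1000))           # 1000 passes for 1000 tokens
    print(parallel_refinement_passes(1000, 16))  # 16 passes, regardless of length
```

In this simplified picture, the sequential decoder's pass count grows with the length of the output, while the parallel decoder's pass count depends only on the number of refinement steps. Real systems differ in many ways (per-pass cost, caching, how many steps quality requires), so the sketch shows the shape of the argument, not a benchmark.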

Menlo Ventures led Inception’s latest round in November 2025. Learn more at inceptionlabs.ai.

Tim is a partner at Menlo Ventures focused on early-stage investments that speak to his passion for AI/ML, the new data stack, and the next-generation cloud. His background as a technology builder, buyer, and seller informs his investments in companies like Pinecone, Neon (acquired by Databricks), Edge Delta, JuliaHub, TruEra…